Tuning DataStax Java Driver for Cassandra (Part 2)

Sep 20, 2020

In the first part of this blog post series we covered the basic settings that can give you a few fast wins when tuning performance. We explained how to tune pooling options to send more parallel requests over the wire, and how to decrease the socket timeout to get a fail-fast scenario in which you can handle failures before they create a bottleneck for your application.

In this part we will concentrate on two more advanced features. Speculative executions let the driver issue parallel requests if a response does not arrive within some threshold, and the latency-aware load balancing policy measures node performance, penalizes slow nodes and favors nodes that perform well.

Speculative executions

If you have a hard latency threshold and have some room to make additional requests to your cluster, you might try this driver option. It is off by default. You can enable it by passing an implementation of SpeculativeExecutionPolicy to the Cluster builder like this:

SpeculativeExecutionPolicy mySpeculativeExecutionPolicy = new ConstantSpeculativeExecutionPolicy(50, 2);
Cluster.builder().addContactPoints(nodes).withSpeculativeExecutionPolicy(mySpeculativeExecutionPolicy).build();

Speculative Execution Policy

In the above example we are telling the driver to wait 50 milliseconds for a response to the first request (the first constructor parameter) and, if no response arrives, to issue an additional request to the next available host. The second parameter tells the driver the maximum number of speculative executions, excluding the initial request, so in our example at most 3 requests will be issued. As soon as one request returns successfully, the driver cancels the other ongoing requests for the same query. This means that if the driver does not get a response after 50 milliseconds it will contact the next available host, but if it then gets a response from the first host after, let’s say, 75ms, it will cancel the ongoing request to the second host.
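The timeline described above can be sketched with a small stand-alone simulation. This is not driver code: the class SpeculativeTimeline, its Outcome type and the simulate method are hypothetical names introduced here for illustration, and the sketch assumes every host eventually answers with a fixed latency and that each new execution starts one full delay after the previous one.

```java
/** Illustrative model of constant-delay speculative execution; not part of the driver. */
final class SpeculativeTimeline {

    /** Result of one simulated query. */
    static final class Outcome {
        final int winner;             // index of the host whose response arrived first
        final long completedAtMillis; // time of that response, measured from the initial request
        final int requestsSent;       // initial request plus the speculative ones actually started

        Outcome(int winner, long completedAtMillis, int requestsSent) {
            this.winner = winner;
            this.completedAtMillis = completedAtMillis;
            this.requestsSent = requestsSent;
        }
    }

    /**
     * hostLatenciesMillis[i] is the (assumed) response time of the i-th host in the query plan.
     * A new execution starts every delayMillis until a response has arrived or the
     * limit of 1 + maxSpeculativeExecutions requests is reached.
     */
    static Outcome simulate(long[] hostLatenciesMillis, long delayMillis, int maxSpeculativeExecutions) {
        if (hostLatenciesMillis.length == 0 || maxSpeculativeExecutions < 0) {
            throw new IllegalArgumentException("need at least one host and a non-negative limit");
        }
        int limit = Math.min(hostLatenciesMillis.length, 1 + maxSpeculativeExecutions);
        int winner = -1;
        long firstResponseAt = Long.MAX_VALUE;
        int sent = 0;
        for (int i = 0; i < limit; i++) {
            long startedAt = i * delayMillis;
            if (startedAt >= firstResponseAt) {
                break; // a response already arrived, so no further execution is started
            }
            sent++;
            long respondsAt = startedAt + hostLatenciesMillis[i];
            if (respondsAt < firstResponseAt) {
                firstResponseAt = respondsAt;
                winner = i;
            }
        }
        return new Outcome(winner, firstResponseAt, sent);
    }
}
```

Calling SpeculativeTimeline.simulate(new long[] {75, 40, 10}, 50, 2) models the case from the text: the second request goes out at 50ms, the first host answers at 75ms and wins, and the third request is never sent.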

In addition to the ConstantSpeculativeExecutionPolicy explained above, there is one more, PercentileSpeculativeExecutionPolicy, which is more complicated and is constructed like this:

SpeculativeExecutionPolicy mySpeculativeExecutionPolicy = new PercentileSpeculativeExecutionPolicy(latencyTracker, 99, 2);

Percentile Speculative Execution Policy

As you can see, you must provide a latency tracker, which the policy uses to store latencies over a sliding time window. The driver does not bundle the histogram implementation the tracker relies on, so you must make sure that you have HdrHistogram in your dependencies when you want to use this policy. Latencies are kept over a time window in an in-memory structure, and with the second parameter (99, meaning the 99th percentile of all recorded requests) you tell the policy when to try additional requests. So, in our example, a request is considered slow when it takes longer than 99% of the requests recorded in HdrHistogram over that time period, and at most 2 additional requests will be issued (the third constructor parameter).
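To make the meaning of the percentile parameter concrete, here is a naive sketch of how a threshold can be derived from recent latencies. It is an assumption-laden illustration, not the driver's tracker: PercentileWindow is a hypothetical class, it keeps the last N samples instead of a time window, and it sorts them on every call instead of using HdrHistogram.

```java
import java.util.ArrayDeque;
import java.util.Deque;

/** Naive sliding window of latencies with a nearest-rank percentile; illustration only. */
final class PercentileWindow {
    private final int capacity;
    private final Deque<Long> samples = new ArrayDeque<>();

    PercentileWindow(int capacity) {
        if (capacity <= 0) {
            throw new IllegalArgumentException("capacity must be positive");
        }
        this.capacity = capacity;
    }

    /** Records one latency and evicts the oldest sample once the window is full. */
    void record(long latencyMillis) {
        if (samples.size() == capacity) {
            samples.removeFirst();
        }
        samples.addLast(latencyMillis);
    }

    /** Returns the latency at the given percentile (0 < p <= 100) using the nearest-rank method. */
    long percentile(double p) {
        if (samples.isEmpty()) {
            throw new IllegalStateException("no latencies recorded yet");
        }
        if (p <= 0 || p > 100) {
            throw new IllegalArgumentException("percentile must be in (0, 100]");
        }
        long[] sorted = samples.stream().mapToLong(Long::longValue).sorted().toArray();
        int rank = (int) Math.ceil(p * sorted.length / 100.0);
        return sorted[Math.max(rank, 1) - 1];
    }

    /** A request is treated as slow once it has been running longer than the percentile threshold. */
    boolean isSlow(long elapsedMillis, double p) {
        return elapsedMillis > percentile(p);
    }
}
```

With latencies of 1 to 100 milliseconds recorded, percentile(99) returns 99, so under this sketch only a request that has been running for more than 99ms would trigger an additional execution.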

A couple of important guidelines if you want to use this policy. It lowers overall latency, but it adds additional requests from your application. If you have many client applications connecting to your cluster this can be significant, and it can backfire if you put too much stress on the cluster. Monitoring is your friend here: check the DataStax documentation on how to monitor the number of speculative executions, and be sure to add this to your monitoring system before you roll this out. Also closely monitor the network traffic between the application and the cluster, and thread state, since you have limits on concurrent readers and writers as well. Driver 3.x brought an idempotency flag for all outgoing requests, which is important here: if a request is not marked as idempotent it will not be tried again. Make sure to mark as idempotent all the requests that you want the speculative execution policy to handle. Finally, write your own policy if neither the percentile-based nor the constant one solves your problem. SpeculativeExecutionPolicy is an interface provided by the driver, so you can customize it based on your needs.

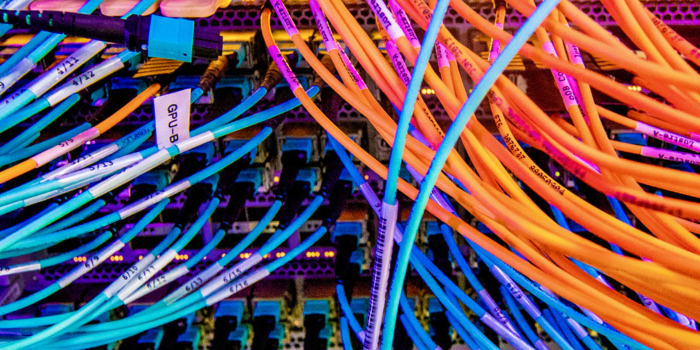

LatencyAware load balancing policy

This was a sweet spot for us, especially for AWS deployments with nodes using EBS volumes. LatencyAwarePolicy is a load balancing policy which you can put on top of any other policy (e.g. DCAwareRoundRobinPolicy or TokenAwarePolicy) and it will filter out slow nodes. It uses the same trick as the percentile-based speculative execution policy: it measures the performance of requests to each host over a time window. Based on these measurements it penalizes hosts with bad performance, where bad means that a host's average latency is worse, by more than a configurable threshold, than that of the best-performing node. It is highly tunable, but you must know what you are doing and what you want to achieve before you start going down this road. Usually people give up really fast and say that they obtain better results with the TokenAware policy.
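The penalizing step can be illustrated with a simplified sketch. It assumes that a host counts as slow when its average latency exceeds the fastest host's average multiplied by an exclusion threshold, and that slow hosts are moved to the end of the query plan instead of being dropped entirely. LatencyFilterSketch and queryPlan are hypothetical names; the real policy also has to decay old measurements and periodically retry penalized hosts, which is left out here.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Simplified model of latency-aware host ordering; not the driver's implementation. */
final class LatencyFilterSketch {

    /**
     * Orders hosts so that those within exclusionThreshold times the best average
     * latency come first (in the map's iteration order) and penalized hosts come last.
     */
    static List<String> queryPlan(Map<String, Double> averageLatencyMillis, double exclusionThreshold) {
        double fastest = Double.POSITIVE_INFINITY;
        for (double latency : averageLatencyMillis.values()) {
            fastest = Math.min(fastest, latency);
        }
        double limit = fastest * exclusionThreshold;

        List<String> preferred = new ArrayList<>();
        List<String> penalized = new ArrayList<>();
        for (Map.Entry<String, Double> entry : averageLatencyMillis.entrySet()) {
            if (entry.getValue() <= limit) {
                preferred.add(entry.getKey());
            } else {
                penalized.add(entry.getKey());
            }
        }
        preferred.addAll(penalized); // slow hosts are only tried after all healthy ones
        return preferred;
    }
}
```

For example, with average latencies of 50ms, 10ms and 12ms for hosts a, b and c (in that iteration order) and a threshold of 2.0, the limit is 20ms, so the plan becomes b, c, a.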

For us, it provided pretty good results since it reduced the impact of the infrastructure. AWS EBS volumes are a good choice these days, since they have improved a lot and they give you persistence across reboots, plus snapshots. But they have latency peaks, and the LatencyAware load balancing policy at the driver level can help you cope with them. If you tune it right, the driver will stop using the node whose EBS volume shows high latency and start routing traffic to other nodes. However, you should make sure that you have enough replicas holding the data to choose from, since this load balancing policy can easily create hotspots. More on specific settings based on our use case in the next blog post, but do try it, especially if you have infrastructure deployed on AWS and you use EBS volumes for data storage on nodes.

Conclusion

There are many options on the application side for improving things based on your use case. Some things can be done at the application level alone (like the load balancing policy) and some must be coordinated with changes at the cluster level (like socket timeouts). Make sure you fully understand what you are doing before you change anything, and make sure you have proper monitoring and a baseline in place first. No improvement comes without cost, so understand the driver and your use case and make the changes which best fit your application's needs. In the next blog post we will present our use case and explain each setting we chose for it.